Xiyuan (Cyrus) Liu

Staff Software Engineer · Robotics, Autonomous Driving and AI

I build the software that makes autonomous vehicles think. Currently at Bosch developing AI-driven systems for autonomous vehicles. Previously at Waymo, Motional, NVIDIA, and Aurora. M.S. from CMU Robotics Institute.

Building autonomous vehicles

from A* search to AI planning.

Staff Software Engineer — Behavior, AI-based Planning

- Defined software architecture and modular design for the classical L4 behavior planning stack, establishing integration contracts across prediction, trajectory generation, and control interfaces.

- Identified data generation as a foundational gap for E2E ML planning and led the pipeline from concept to org-wide adoption, ahead of broader team prioritization.

- Pioneered a GPU-based RL simulator for closed-loop motion planning, now a core project at the Sunnyvale site.

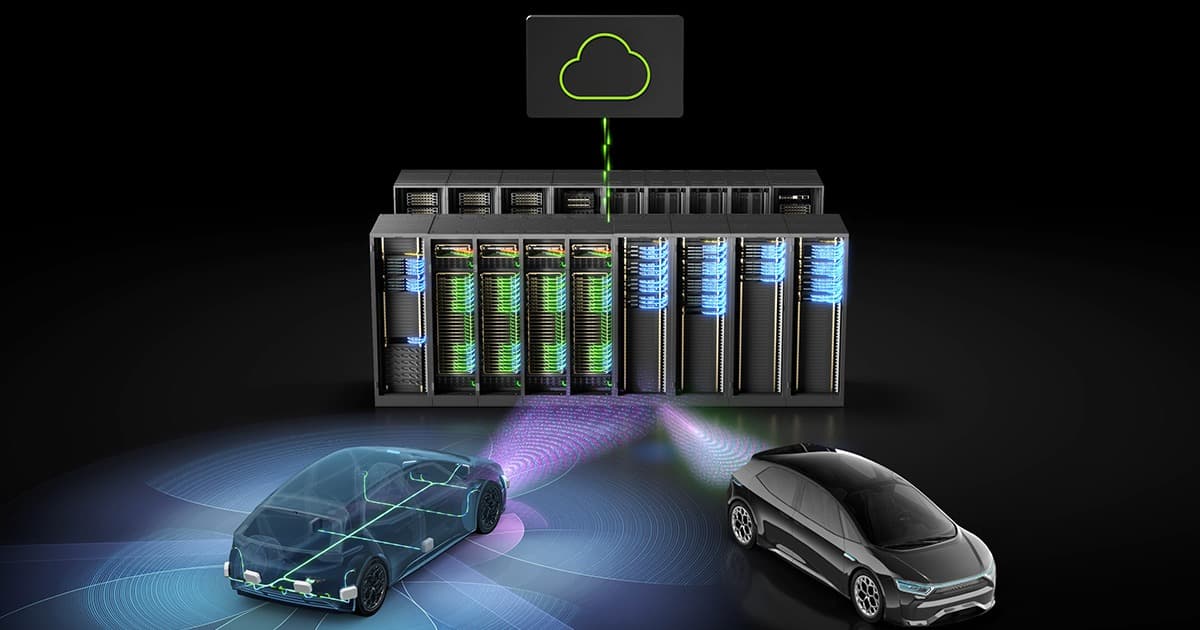

- Architected federated learning infrastructure across Azure and Tencent Cloud, positioning Sunnyvale as the technical lead for global ML within Bosch.

- Scaled a RAG-based quality management platform from hackathon prototype to 100+ weekly global users on a $0 budget, driving AI transformation across organizational boundaries.

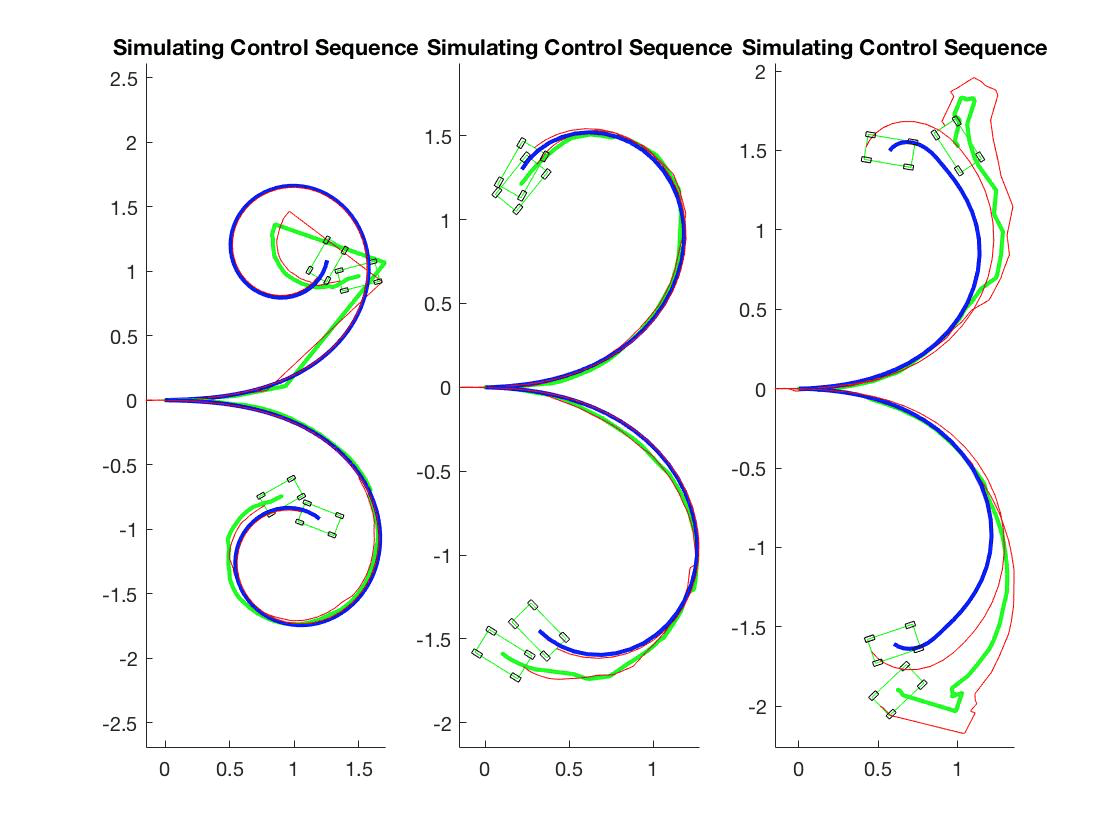

Staff Software Engineer IC5 — Prediction Planning & Control

- Rapidly onboarded to the L2++ planning stack; overhauled the path optimizer and built an iLQR rapid-prototyping and debugging toolchain, demonstrating the ability to drive architectural impact across an unfamiliar codebase.

- Implemented lateral behavior improvements for urban driving, including road edge detection and avoidance logic.

Software Engineer — Motion Generation

- Developed foundational search-based algorithms (including reasoning interfaces, cost functions, and graph construction) and modularized the sampling pipeline for scalability and maintainability.

- Built evaluation and debugging tooling that enabled comprehensive assessment of search quality and effectiveness.

- Architected the next-generation motion planner with modularized component selection; shaped org-wide technical direction and received the Onboard Software Excellence Award.

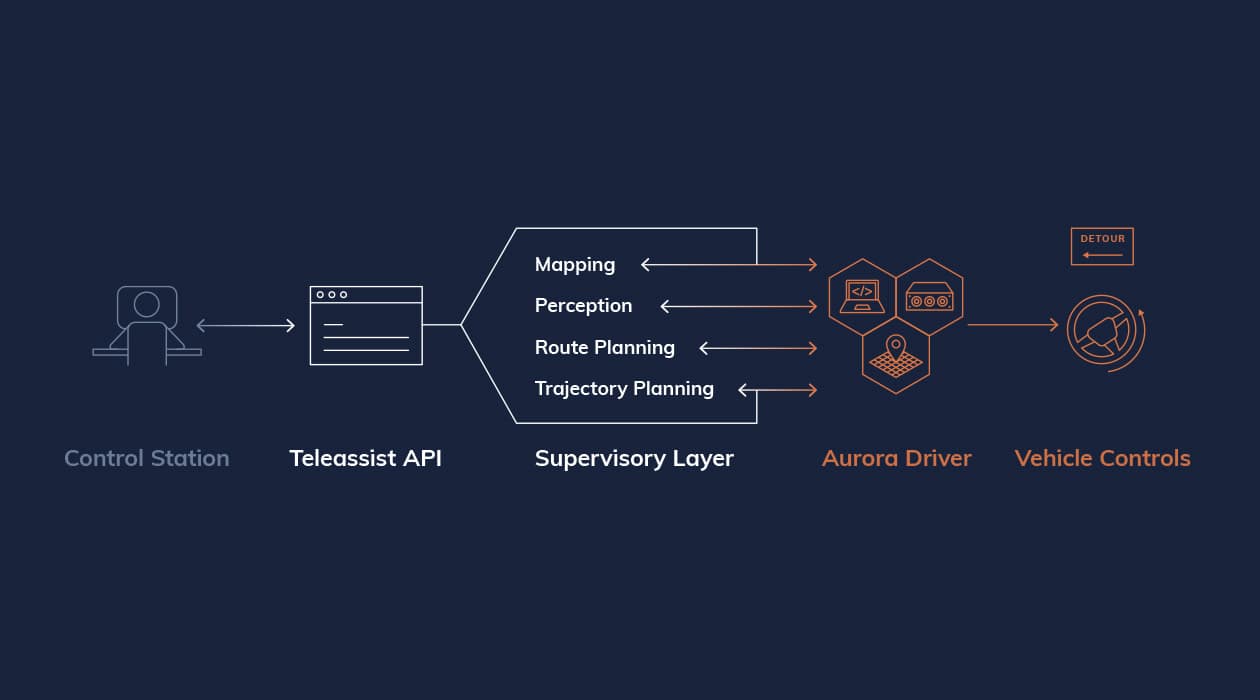

Software Engineer — TeleAssist

- As primary developer, designed and shipped the first fully operational tele-operation MVP, integrating closely with the perception stack, autonomy stack, and user control systems.

Senior R&D Software Engineer — Motion Planning & Control

- Enhanced graph generation, achieving a 40x speedup; validated across a fleet of 100+ vehicles in Las Vegas.

- Led the optimization pipeline architecture and spearheaded the speed planning transition from prototype to fleet deployment in 3 months.

Software Engineering Intern — Perception & Localization

- Built a 3D point cloud filter for semi-static object removal to improve sidewalk robot localization. SLAM maps captured during mapping runs contain transient objects (such as parked cars and pedestrians) that are absent during task runs, causing localization drift.

- Implemented a visibility-based range image filter that projects consecutive LiDAR frames into spherical range images and flags points in the prior map that are occluded by closer observations in the current scan as transient. It effectively removes dynamic objects such as pedestrians, where inter-frame point cloud differences are high.

- Identified the open challenge posed by semi-static objects (e.g. parked cars) that appear static within a run but disappear between runs, a problem class that requires map maintenance over a longer time horizon than frame-to-frame comparison.
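As a rough illustration of the range image idea above, here is a minimal sketch in plain Python. It is not the original implementation: the function names, bin counts, vertical field of view, and `margin` threshold are all hypothetical, and it assumes the map points have already been transformed into the current scan's sensor frame. The direction of the comparison is also an assumption: this sketch uses the see-through form of the visibility check, flagging a map point when the current scan returns a noticeably longer range through the same pixel.

```python
import math


def to_pixel(point, h_bins=360, v_bins=32, v_fov=(-math.pi / 6, math.pi / 6)):
    """Map a sensor-frame (x, y, z) point to ((row, col), range).

    Returns (None, range) when the point is at the origin or outside
    the assumed vertical field of view.
    """
    x, y, z = point
    rng = math.sqrt(x * x + y * y + z * z)
    if rng == 0.0:
        return None, rng
    azimuth = math.atan2(y, x)       # horizontal angle in [-pi, pi]
    elevation = math.asin(z / rng)   # vertical angle in [-pi/2, pi/2]
    v_min, v_max = v_fov
    if not v_min <= elevation <= v_max:
        return None, rng
    col = int((azimuth + math.pi) / (2 * math.pi) * h_bins) % h_bins
    row = min(int((elevation - v_min) / (v_max - v_min) * v_bins), v_bins - 1)
    return (row, col), rng


def range_image(points, **kw):
    """Sparse spherical range image: pixel -> closest range seen in that pixel."""
    image = {}
    for p in points:
        pixel, rng = to_pixel(p, **kw)
        if pixel is not None and rng < image.get(pixel, math.inf):
            image[pixel] = rng
    return image


def flag_transient(map_points, scan_points, margin=0.5, **kw):
    """Return one bool per map point.

    True means the current scan measured a range more than `margin`
    longer than the map point in the same pixel, i.e. the scan saw
    past the place where the map point used to be.
    """
    scan_image = range_image(scan_points, **kw)
    flags = []
    for p in map_points:
        pixel, rng = to_pixel(p, **kw)
        scan_rng = scan_image.get(pixel) if pixel is not None else None
        flags.append(scan_rng is not None and scan_rng - rng > margin)
    return flags


# A pedestrian at 5 m in the map; the current scan sees a wall at 10 m behind it.
map_points = [(5.0, 0.0, 0.0), (10.0, 0.0, 0.0), (0.0, 8.0, 0.0)]
scan_points = [(10.0, 0.0, 0.0), (0.0, 8.0, 0.0)]
print(flag_transient(map_points, scan_points))  # [True, False, False]
```

A per-pixel comparison like this only catches objects that move between the two frames being compared, which is consistent with the semi-static limitation noted above: a parked car that stays put for the whole run never produces a range difference.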

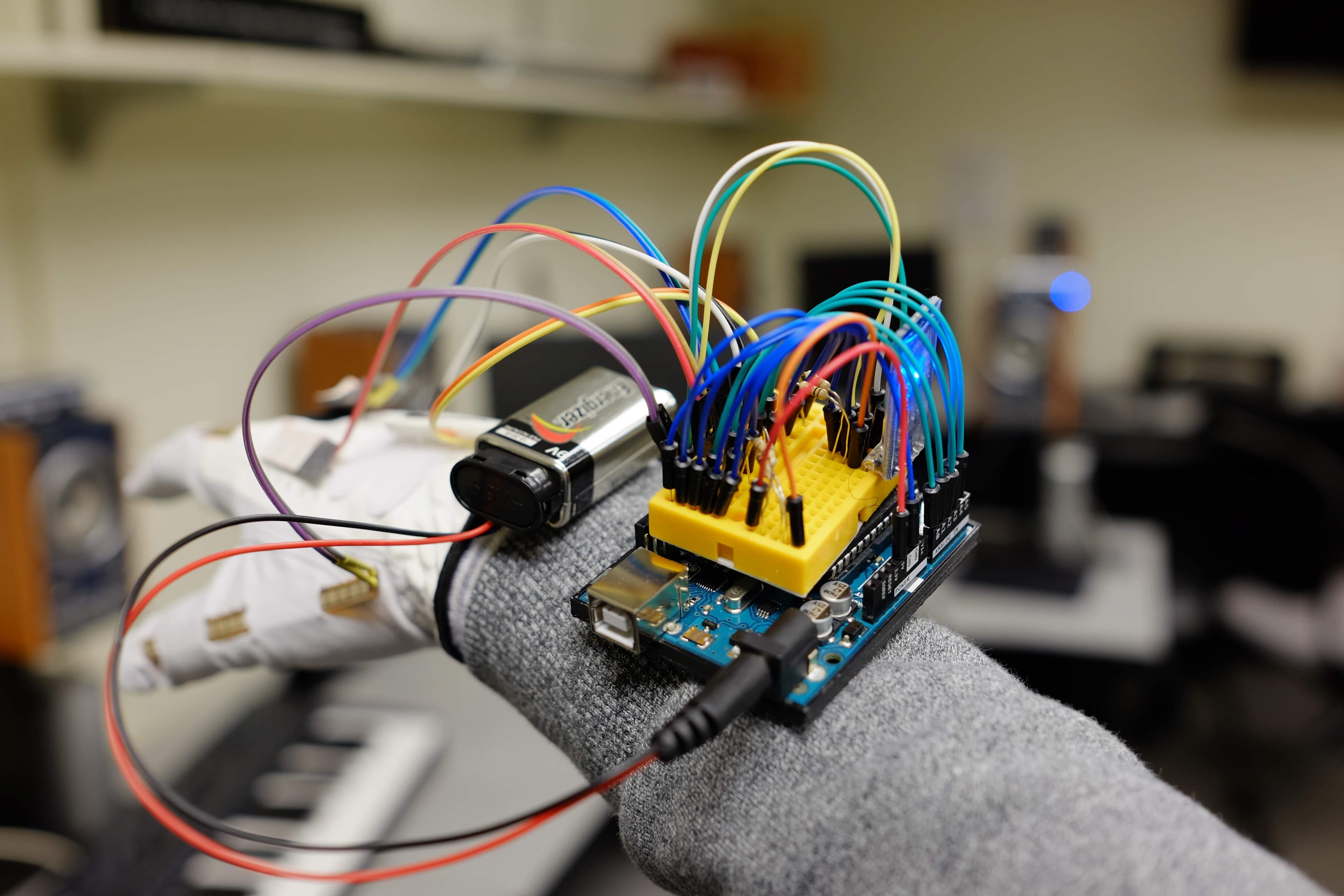

M.S., Robotic Systems Development

- Robotics Institute, School of Computer Science.

- Focused on autonomous systems, motion planning, and ML-based robotics.

B.S., Computer Science — Summa Cum Laude

- Graduated with highest honors in Computer Science.

Projects & experiments

things I built for fun.

Dungeon OS

Live · CLI-based D&D engine where Claude acts as the Dungeon Master, handling storytelling, bookkeeping, and NPC management via LLM tool calls.

I’m a huge fan of Baldur’s Gate 3, even though I’ve never played D&D in real life (and don’t actually know anyone who does). With the AI boom, an AI Dungeon Master seemed like the logical next step. With a bit of CLI skill and some APIs, you don’t even have to code a DM; Claude can just *be* the DM. Storytelling, bookkeeping, and NPC management, all handled. It’s playable, though I have no idea if it’s actually ‘good’ D&D. But hey, it’s a start.

PR Monitor

Live · Aggregated PR review dashboard for engineers with multiple GitHub accounts across organizations, with priority sorting and noise filtering.

One of the best lessons I learned from my mentors at Waymo and Google: PR reviews should be priority zero. A review should almost always be the first thing you do once it’s requested; that’s how you keep development momentum alive. However, I’ve somehow ended up with five GitHub accounts across four different organizations, and it’s a pain to filter through the noise GitHub throws at you. Back at Google, there was a handy internal tool for this, but I haven’t found anything similar for the outside world. Since we’re in the age of AI, I figured it should be trivial to just build it myself.